If you've ever tried to sing “Happy Birthday” on a Zoom call, you already know the problem. What should be a simple, joyful act turns into an agonizing pile-up of voices, each one arriving at a slightly different time, nobody able to find or hold a shared beat. It sounds less like a song and more like a traffic accident at an intersection where all the lights are green.

Most people shrug this off as “just how the internet works.” And they're not wrong—but the why is worth understanding, because it explains not only why Zoom fails for music but why most alternatives are frustrating too, and what a real solution actually requires.

What Is Latency, Exactly?

Latency is the delay between when something happens and when you perceive it. In everyday life, you experience latency constantly—you just don't notice it.

Stand across a football field from someone clapping their hands. You'll see the clap before you hear it, because light travels nearly a million times faster than sound. At 100 yards, the sound takes about a quarter of a second to reach you. That quarter-second gap is latency.

On the internet, latency is the time it takes for data to travel from one computer to another. When you speak on Zoom, your voice is captured by your microphone, converted to digital data, sent across the internet through a chain of routers and servers, received by the other person's computer, converted back to audio, and played through their speakers. Every step adds delay. The total trip typically takes 150 to 300 milliseconds, or roughly a seventh to a third of a second.

For conversation, that's fine. Humans are remarkably tolerant of delay in speech. We pause between sentences, we take turns, we fill gaps with “uh-huh” and nods. A 200-millisecond delay in a phone call is barely noticeable. We've been having transcontinental phone conversations for decades without complaint.

Music is a completely different animal.

The 25-Millisecond Threshold

Here's the number that matters: ±25 milliseconds. That's the threshold for ensemble musical performance—the maximum delay between two performers before the human ear perceives them as out of sync. Research in music cognition and acoustics consistently arrives at this figure. Beyond it, musicians sound like they're fighting each other rather than performing together.

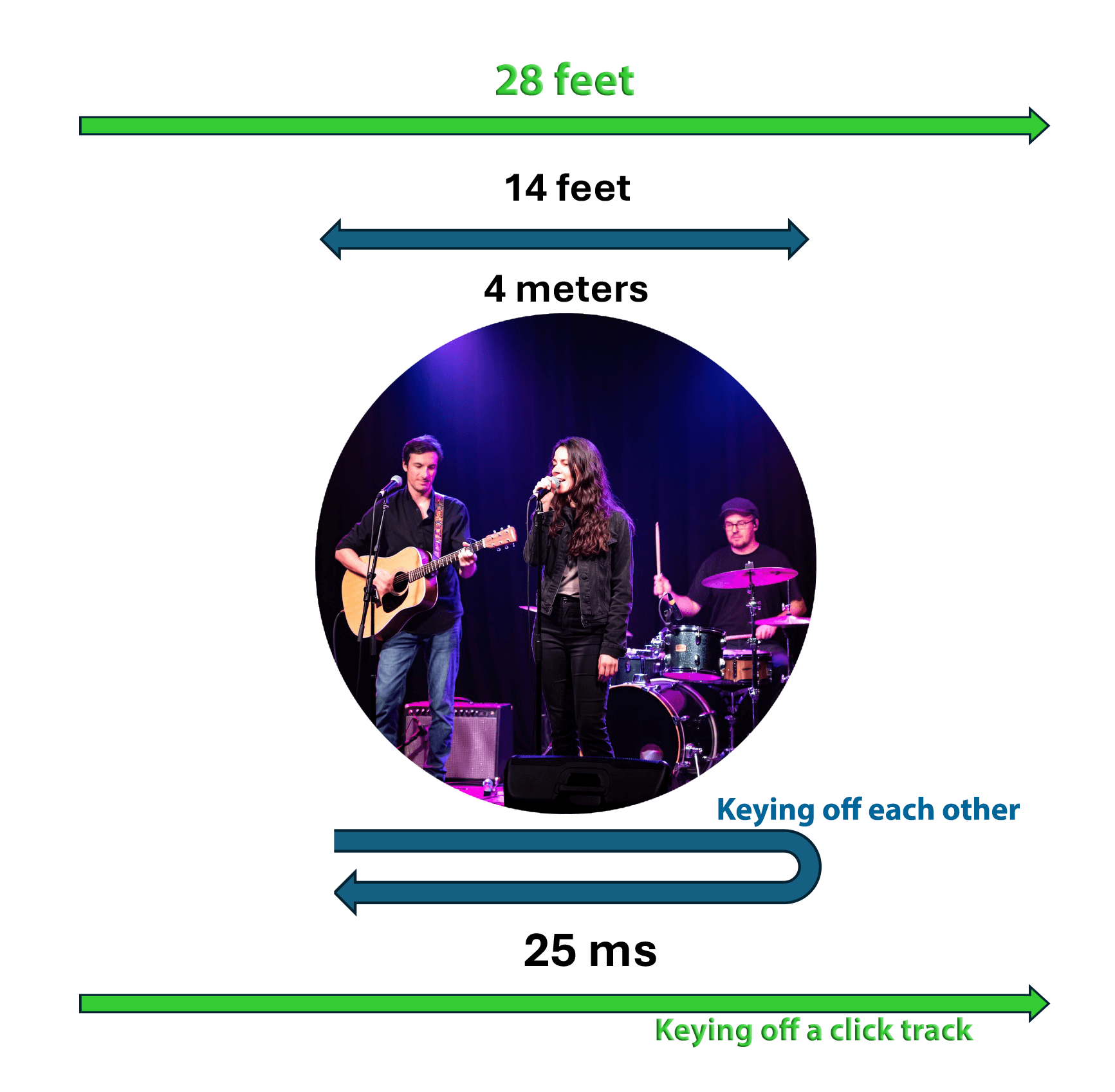

To put 25 milliseconds in physical terms: sound travels about 28 feet in that time. If you and I are standing 28 feet apart in a room and I clap, you hear my clap roughly 25 milliseconds after I make it. At that distance, we can still sing together comfortably. Move much farther apart and it starts to fall apart.

This is why large choirs and orchestras work: even a big concert hall is only about 100 feet deep. The conductor stands front and center, giving everyone a shared visual reference, and the farthest musicians are within a range where the speed of sound still permits tight synchronization. It's also why marching bands at football halftime shows rely on a drum major rather than trying to listen to each other: at those distances, sound delays are already causing problems.

One point worth noting: if two people are both listening to the same reference (a conductor, a click track), the 25 ms threshold lets them stand up to 28 feet apart, because the sound travels only one way. But if they're trying to key off each other, the sound must travel there and back. That round trip halves the effective distance to only 14 feet.
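The one-way versus round-trip arithmetic can be checked with a short sketch. The speed of sound used here (about 1,125 feet per second, typical for room-temperature air) and the function name are assumptions for illustration only.

```python
# Assumed value: speed of sound in air near 20 C, about 343 m/s.
SPEED_OF_SOUND_FT_PER_S = 1125.0


def max_separation_ft(threshold_ms: float, round_trip: bool = False) -> float:
    """Farthest two performers can stand apart and stay inside the threshold."""
    one_way_ft = SPEED_OF_SOUND_FT_PER_S * threshold_ms / 1000.0
    # If each performer reacts to the other, sound has to go there and back,
    # so only half the one-way distance is available.
    return one_way_ft / 2 if round_trip else one_way_ft


shared_reference_ft = max_separation_ft(25)
mutual_listening_ft = max_separation_ft(25, round_trip=True)

print(f"following a shared reference: {shared_reference_ft:.0f} ft")
print(f"keying off each other: {mutual_listening_ft:.0f} ft")
```

With a 25 ms threshold this gives roughly 28 feet and 14 feet, matching the figures above.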

Now consider Zoom. A typical video call introduces 150 to 300 milliseconds of latency. That's six to twelve times the ensemble threshold. It's the acoustic equivalent of trying to sing with someone standing roughly 170 to 340 feet away, about the length of a football field, except worse. On a football field the delay is at least constant and predictable, while internet latency fluctuates from moment to moment. The delay that was 180 milliseconds a second ago might be 220 milliseconds now and 160 milliseconds in another second. You can't compensate for a target that won't hold still.

And it is not just Zoom. Every networked video conferencing system behaves the same way. One could as easily ask, why can't an ensemble make music on Google Meet? Why can't a chorus sing together on Microsoft Teams? The answer is the same.
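To put numbers on the video-call comparison, here is a minimal sketch that converts network delay into an equivalent acoustic distance, then shows why a single fixed correction cannot absorb a fluctuating delay. The speed of sound and both function names are assumptions for illustration; the delay values are the ones used in the text.

```python
SPEED_OF_SOUND_FT_PER_S = 1125.0  # assumed: air near 20 C
ENSEMBLE_THRESHOLD_MS = 25.0      # threshold quoted in the article


def equivalent_distance_ft(delay_ms: float) -> float:
    """Distance at which sound in air would arrive delay_ms late."""
    return SPEED_OF_SOUND_FT_PER_S * delay_ms / 1000.0


def worst_residual_ms(observed_delays_ms, fixed_compensation_ms):
    """Largest timing error left after subtracting one fixed offset."""
    return max(abs(d - fixed_compensation_ms) for d in observed_delays_ms)


for delay_ms in (150, 300):
    multiple = delay_ms / ENSEMBLE_THRESHOLD_MS
    distance = equivalent_distance_ft(delay_ms)
    print(f"{delay_ms} ms is {multiple:.0f}x the threshold, "
          f"like standing about {distance:.0f} ft apart")

# Jitter: a correction tuned to the first measurement goes stale quickly.
observed = [180, 220, 160]
residual = worst_residual_ms(observed, fixed_compensation_ms=180)
print(f"worst error after a fixed 180 ms correction: {residual} ms")
```

Even with the average delay removed, the leftover error in this example is 40 ms, which is already past the 25 ms threshold.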

That's why Zoom choir rehearsals sound like cacophony. It's not a bug. It's physics.

By the way, Zoom's “Original Sound for Musicians” mode helps with audio quality—it disables the processing that makes voices sound tinny and compressed—but it does nothing for latency. Your voice sounds better while arriving late. It still arrives late.

The Four Approaches to Solving This

Over the past several years, a number of companies and open-source projects have tackled the latency problem for musicians. Their approaches fall into four broad categories, and understanding them helps clarify what's possible and what trade-offs each one requires.

Approach 1: Give Up on Real-Time

The most pragmatic response to “you can't sing together in real time over the internet” is to stop trying. This is the virtual choir approach: each person records their part individually, singing along to a conductor's video or a click track, and someone edits the recordings together after the fact.

Eric Whitacre's Virtual Choir project is the most spectacular example—his largest production featured over 17,000 singers from 129 countries. The results are stunning. But nobody is actually singing together at the same time. It's a studio production technique, not a rehearsal or performance tool. It requires significant post-production work and doesn't give performers the experience of hearing themselves as part of an ensemble.

Apps like Smule and Soundtrap work similarly: one person records, then another person sings along with that recording. Fun for casual duets. Not a solution for choir rehearsal or music education.

Approach 2: Fight the Physics

The most technically ambitious approach is to minimize latency to the point where real-time performance becomes possible. JackTrip, originally developed at Stanford's Center for Computer Research in Music and Acoustics (CCRMA), is the standard-bearer here. FarPlay takes a similar approach with a more polished interface.

These platforms transmit uncompressed audio over UDP (a faster but less reliable network protocol), use minimal buffering, and require professional or semi-professional audio hardware with low-latency drivers (such as ASIO on Windows) to shave every possible millisecond from the processing chain. Under ideal conditions (wired ethernet connections, no Wi-Fi, no Bluetooth, geographic proximity, quality audio interfaces), they can achieve 10 to 30 milliseconds of total latency. That puts them at or near the ensemble threshold.
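To see why buffer size matters so much, consider how much delay a single audio buffer adds and how much bandwidth uncompressed audio needs. The sketch below uses a 48 kHz sample rate, 16-bit mono audio, and a handful of common buffer sizes; these are illustrative assumptions, not the documented settings of JackTrip or FarPlay.

```python
def buffer_latency_ms(frames_per_buffer: int, sample_rate_hz: int) -> float:
    """Delay added by waiting for one full buffer of audio frames."""
    return 1000.0 * frames_per_buffer / sample_rate_hz


def uncompressed_bitrate_kbps(sample_rate_hz: int, bit_depth: int,
                              channels: int) -> float:
    """Raw PCM data rate, ignoring packet headers."""
    return sample_rate_hz * bit_depth * channels / 1000.0


SAMPLE_RATE_HZ = 48_000  # assumed for illustration

for frames in (64, 128, 256, 1024):
    latency = buffer_latency_ms(frames, SAMPLE_RATE_HZ)
    print(f"{frames:>5} frames -> {latency:.2f} ms per buffer")

bitrate = uncompressed_bitrate_kbps(SAMPLE_RATE_HZ, bit_depth=16, channels=1)
print(f"16-bit mono at 48 kHz: {bitrate:.0f} kbps")
```

Audio usually passes through several buffers on the way (capture, network, playback), so a large buffer at any one stage can consume most of a 25 ms budget by itself.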

The catch: those conditions are demanding. JackTrip's own documentation is admirably candid about this—they recommend wired ethernet because Wi-Fi adds too much jitter, and they note that participants should be in the same geographic region. If you're a conservatory with a dedicated audio network and everyone is in the same city, these tools can work beautifully. If you're a worship team with members on home Wi-Fi in three different states, they probably won't.

Approach 3: Make It Accessible Enough

Jamulus and SonoBus represent a middle ground: they accept somewhat higher latency in exchange for easier setup and broader compatibility. Jamulus focuses on accessibility for amateur musicians—it works with consumer audio interfaces and maintains a network of public servers. SonoBus is even more tolerant of poor network conditions, working over Wi-Fi and even on mobile devices.

The trade-off is that typical latencies land in the 50 to 100 millisecond range—past the ensemble threshold for tight vocal harmony, though workable for some forms of instrumental jamming where the beat is more flexible. These are genuinely good tools for the right use case. They're not the answer for synchronized choral singing.

Approach 4: Accept the Delay and Compensate

JamKazam takes perhaps the most creative conventional approach: it acknowledges that latency exists and gives users tools to manually compensate for it. Musicians can configure delay offsets, play along with guide tracks, and record their parts separately while seeing each other on video. It's a hybrid of real-time and asynchronous techniques.

The challenge is that manual compensation is fiddly. Latency changes from session to session (and sometimes during a session), so you're constantly adjusting. It requires a level of technical understanding that many musicians—particularly volunteers on a worship team or students in a classroom—don't have and shouldn't need.

What All of These Have in Common

Despite their differences, every approach described above shares one fundamental assumption: the goal is to get performers close enough to real-time that they can synchronize with each other. They differ in how much latency they'll tolerate and what trade-offs they'll accept, but they're all fighting the same battle against the same physics.

This is where it's worth stepping back and asking a different question. What if the goal isn't to make the internet fast enough? What if the goal is to make the speed of the internet irrelevant?

A Different Kind of Solution

This is the approach we took when we built Lyrekos. Instead of trying to minimize latency, we eliminate the need for real-time synchronization entirely.

The key insight is that ensemble performers don't always need to hear each other in real time. What they need is a shared temporal reference—something that keeps everyone locked to the same beat. In a concert hall, that reference is the sound of the ensemble itself, traveling at the speed of sound across a manageable distance. On the internet, that distance is unmanageable. But a backing track—played from the cloud to each participant independently—can serve as that shared reference regardless of distance.

Each singer hears the same backing track and sings along with it. Each singer's audio is recorded with precise timing information relative to that track. Our system then computationally aligns all the performances, compensating automatically and dynamically for whatever delays the internet introduced. The result is a synchronized ensemble recording with accuracy under 20 milliseconds, inside the 25-millisecond ensemble threshold.
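The general idea of aligning takes to a shared reference can be illustrated with a toy sketch. This is not the Lyrekos implementation: the function name, the data layout, and the assumption that each take's position on the backing-track timeline is already known (in samples) are all hypothetical.

```python
from typing import Dict, List


def align_and_mix(recordings: Dict[str, List[float]],
                  start_offsets: Dict[str, int],
                  length: int) -> List[float]:
    """Place each take on the backing-track timeline and sum the results.

    start_offsets[name] is the backing-track sample index at which that
    take's first sample belongs (hypothetical timing metadata).
    """
    mix = [0.0] * length
    for name, samples in recordings.items():
        offset = start_offsets[name]
        for i, value in enumerate(samples):
            t = offset + i
            if 0 <= t < length:  # drop anything outside the timeline
                mix[t] += value
    return mix


# Two singers hit the same beat (timeline index 2), but their recordings
# started at different points relative to the backing track.
recordings = {
    "alto": [0.0, 0.0, 1.0, 0.0],  # started at track sample 0
    "tenor": [1.0, 0.0, 0.0],      # started at track sample 2
}
start_offsets = {"alto": 0, "tenor": 2}

mix = align_and_mix(recordings, start_offsets, length=6)
print(mix)
```

Because each take is positioned by its relationship to the backing track rather than by when it arrived over the network, network delay drops out of the result in this simplified model.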

This means the things that constrain every other solution simply don't apply:

- Wi-Fi works fine. So does Bluetooth.

- Distance doesn't matter. We've tested across six time zones.

- No special hardware. Consumer headphones and your laptop microphone work.

- No software to install. Everything runs in your browser.

- No manual configuration. Synchronization is automatic.

The trade-off is that Lyrekos is designed for structured performance—singing with a backing track or a pre-recorded reference—not for spontaneous free-form jamming. If you want to improvise a jazz session where everyone responds to each other in real time, you need one of the real-time tools (and you need to be geographically close, on wired ethernet, with professional audio gear). If you want your worship team to rehearse a hymn on Tuesday night without everyone driving to church, or your choir students to practice their parts together from their dorm rooms, Lyrekos was built for exactly that.

One comment I sometimes get: Lyrekos is not as good as being in the same room. True. If you can be in the same room, you should be.

As I described in our origin story, this idea came from the same frustration everyone experienced during COVID—trying to sing together on Zoom and discovering it was impossible. The difference was that instead of trying to make Zoom faster, we asked whether we could sidestep the problem entirely. It turned out we could.

Why This Matters Now

COVID may be behind us, but the need for remote musical collaboration isn't. Worship teams still have members who travel, who are homebound, who serve multiple campuses. Music teachers still have students who can't always be in the same room. Choir directors still want to hold midweek sectional rehearsals without asking everyone to drive across town.

The pandemic didn't create the need for synchronized remote music. It revealed how deep that need was.

If you're curious about how Lyrekos works in practice, our post on teaching music online walks through a real lesson sequence. For a look at all the ways the platform can be configured, see Lyrekos’ flexible building blocks. And if you want to see and hear it working, watch our first video demo—four singers across six time zones, perfectly synchronized.

Join our community if you'd like to be among the first to try it.

Lance Glasser

Lance is CEO and Co-founder of Kinetic Audio Innovations. He was previously a faculty member at MIT, Director of Electronics Technology at DARPA, and CTO at KLA. He also makes sculpture, which has nothing to do with audio but explains the hundreds of pounds of bronze in his house.
